Temporally Stable Boundary Labeling for Interactive and Non-Interactive Dynamic Scenes

Computers & Graphics 91(1):265-278, 2020

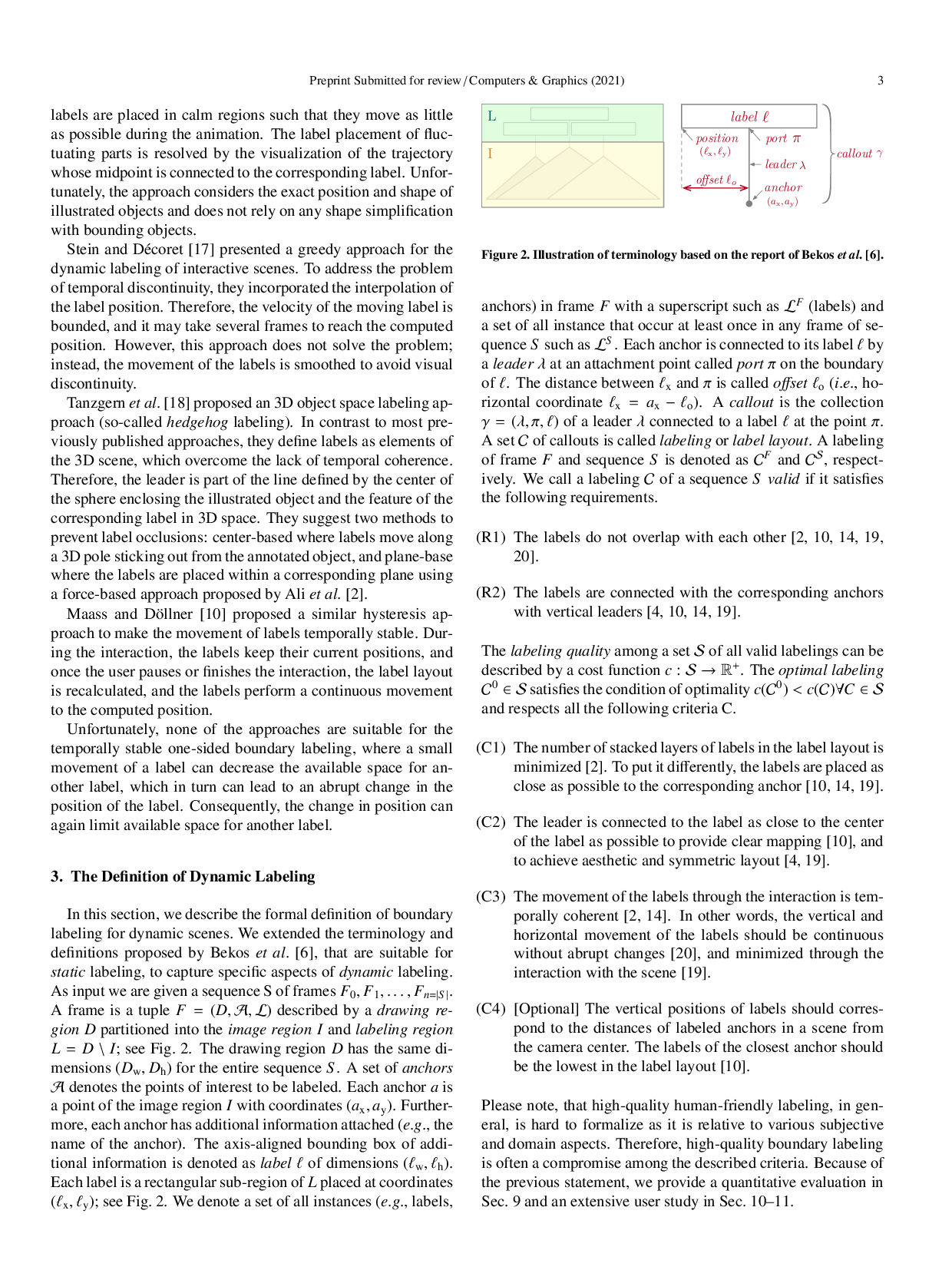

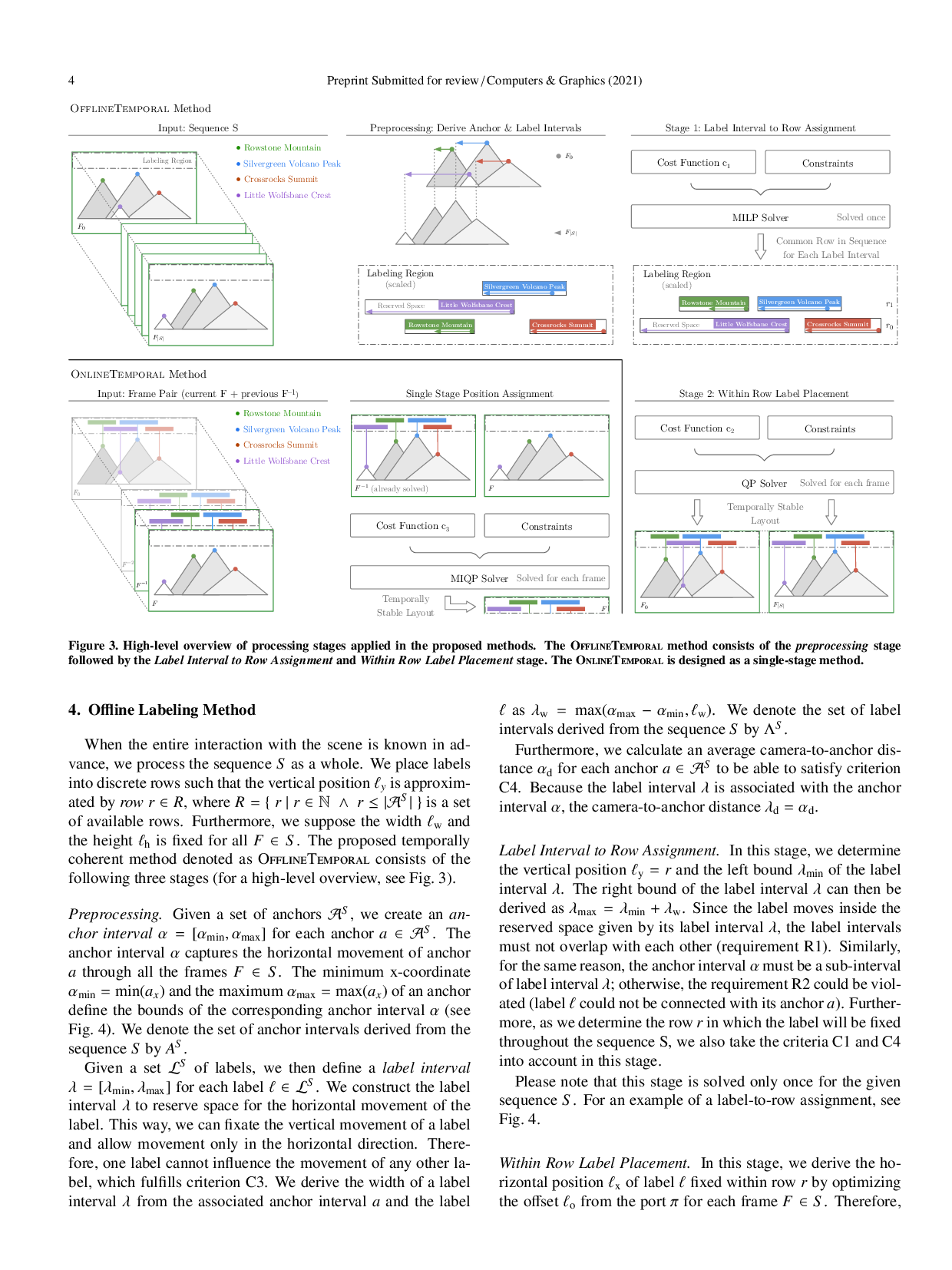

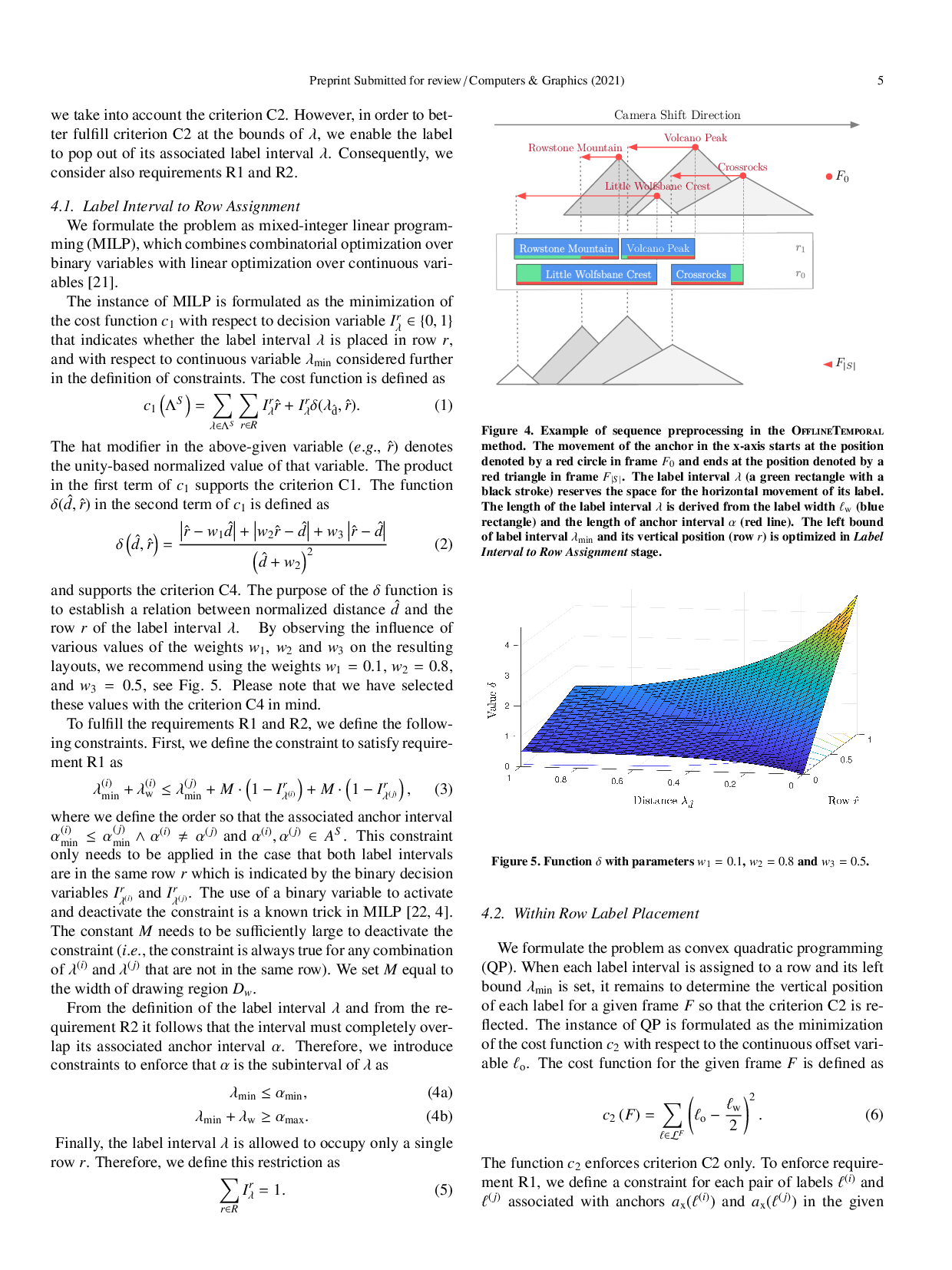

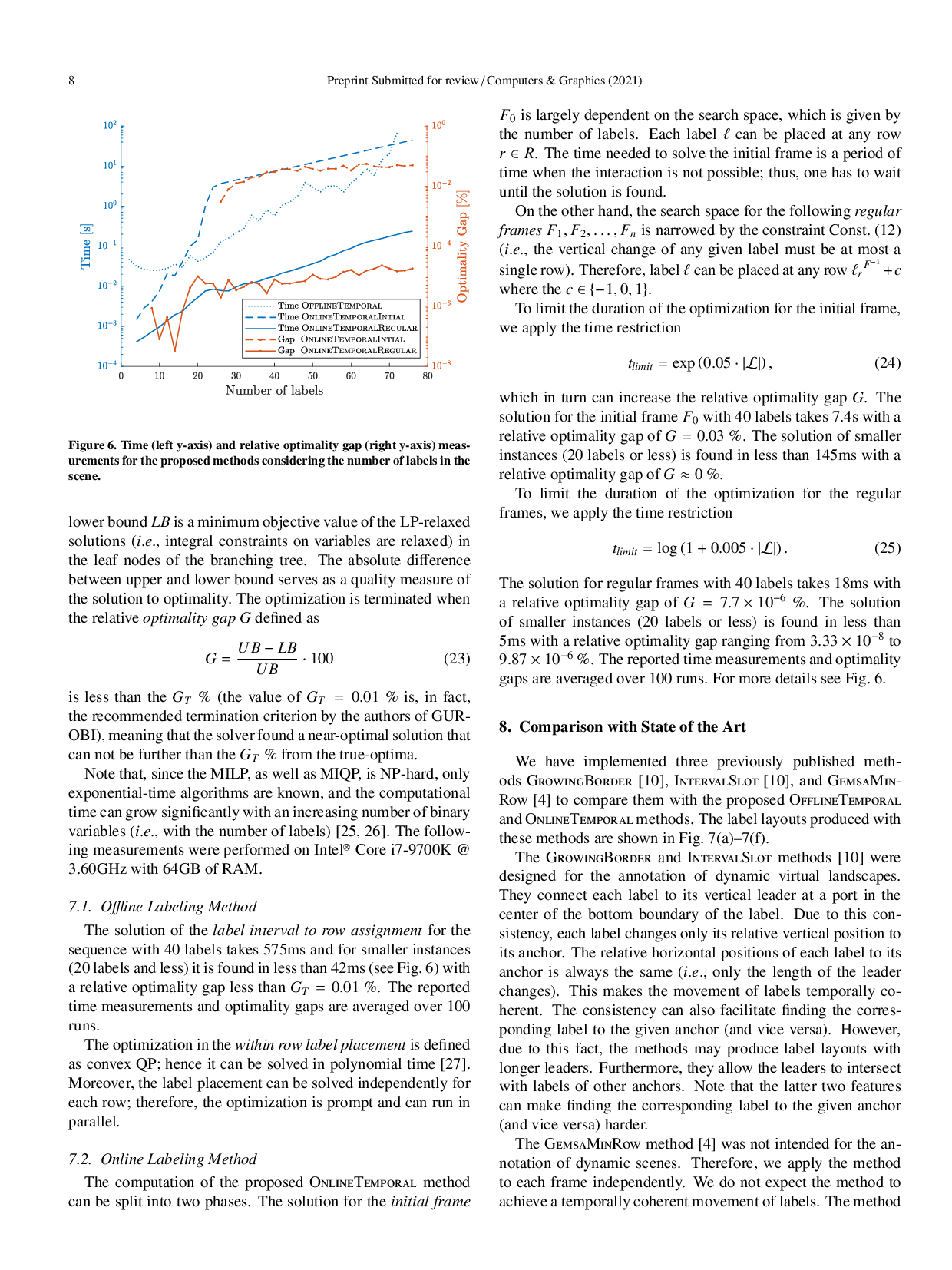

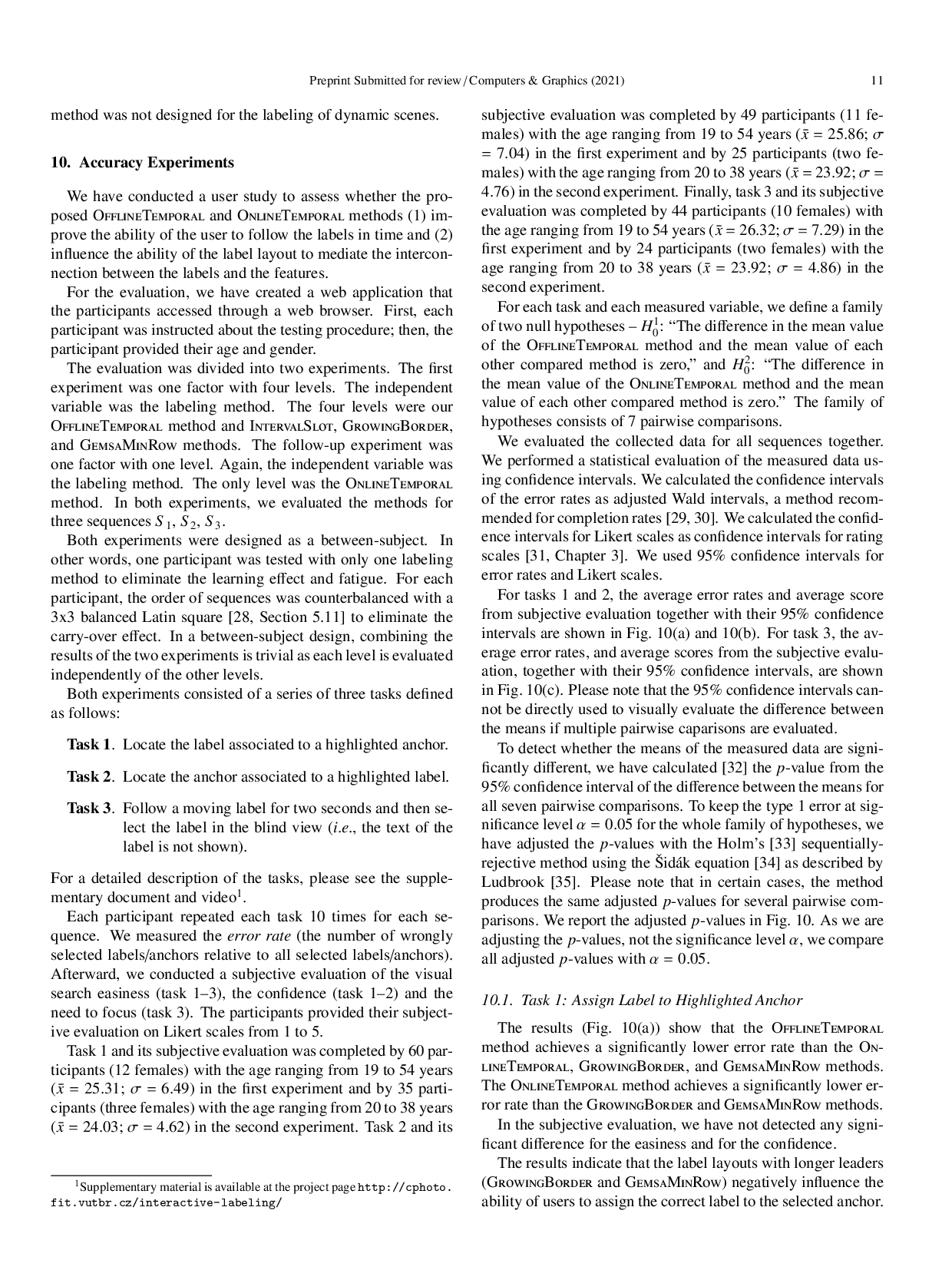

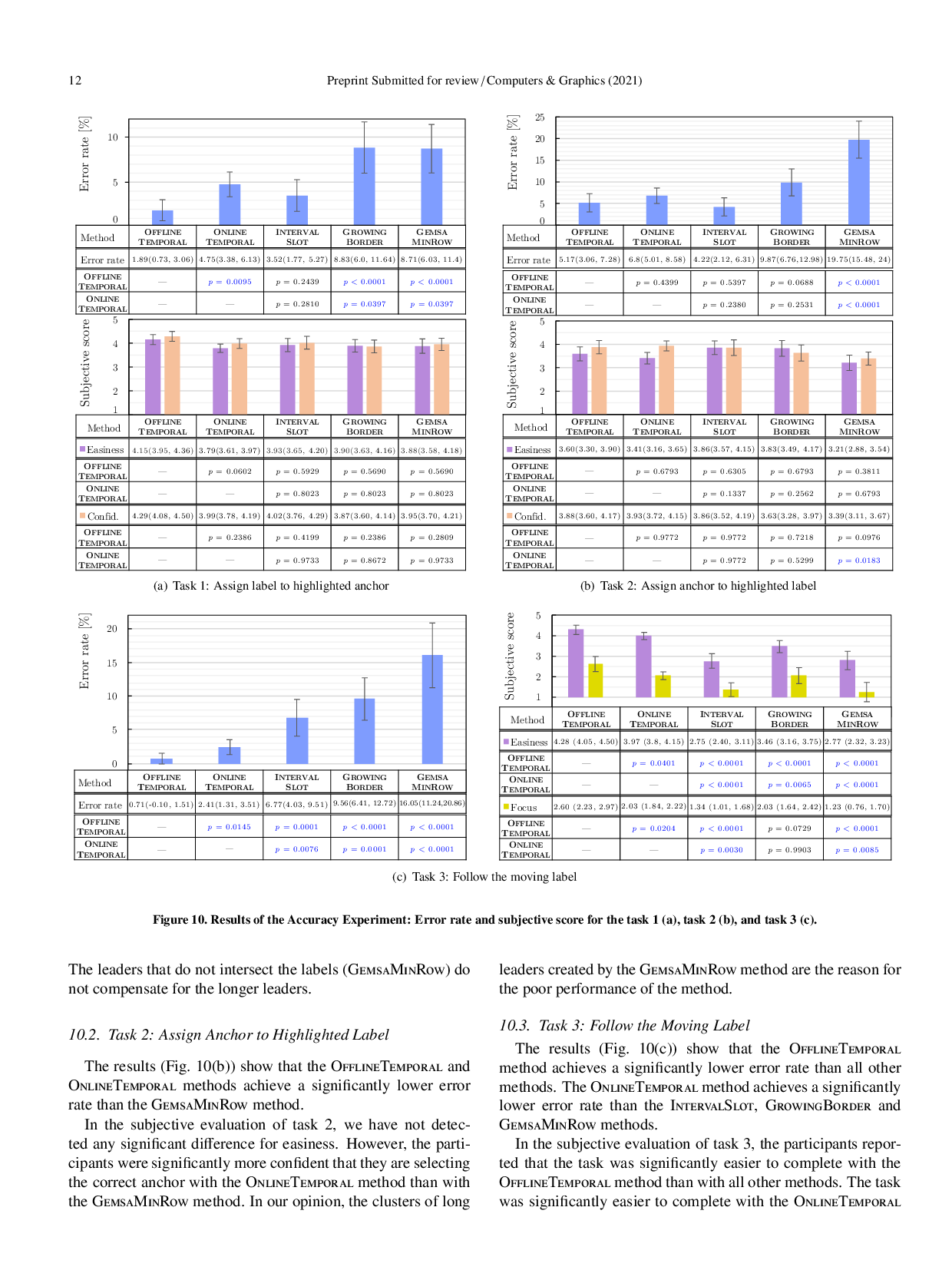

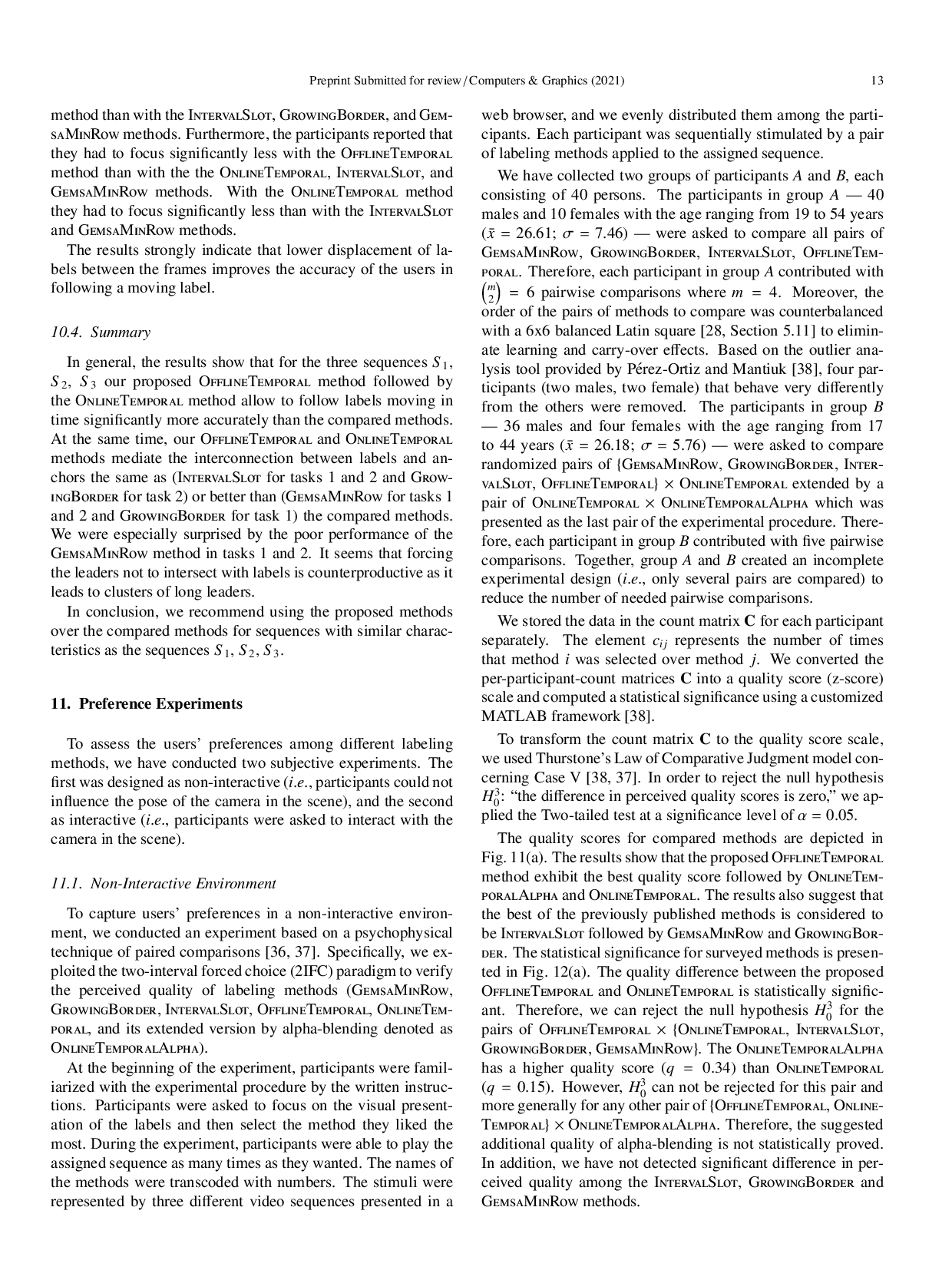

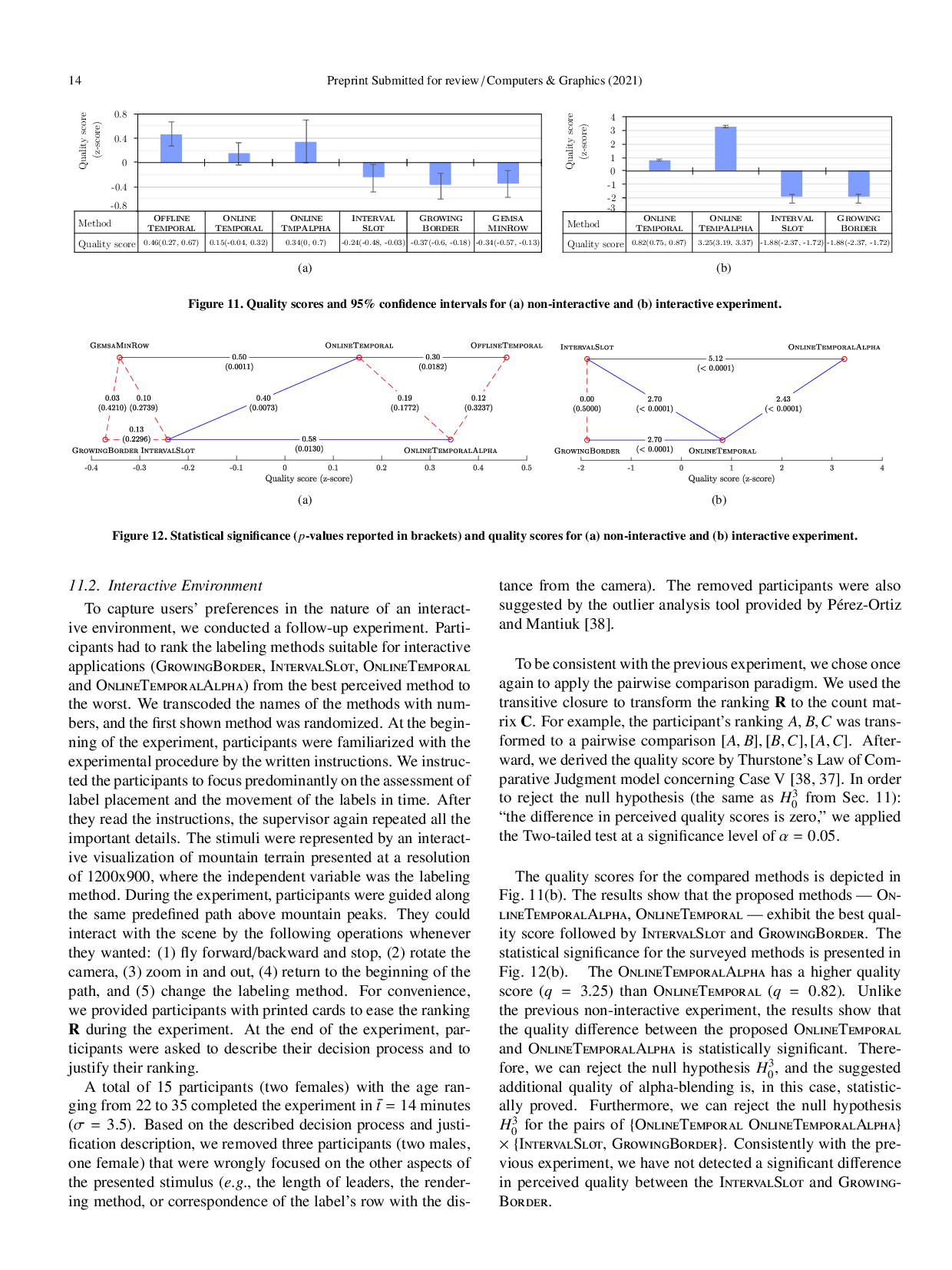

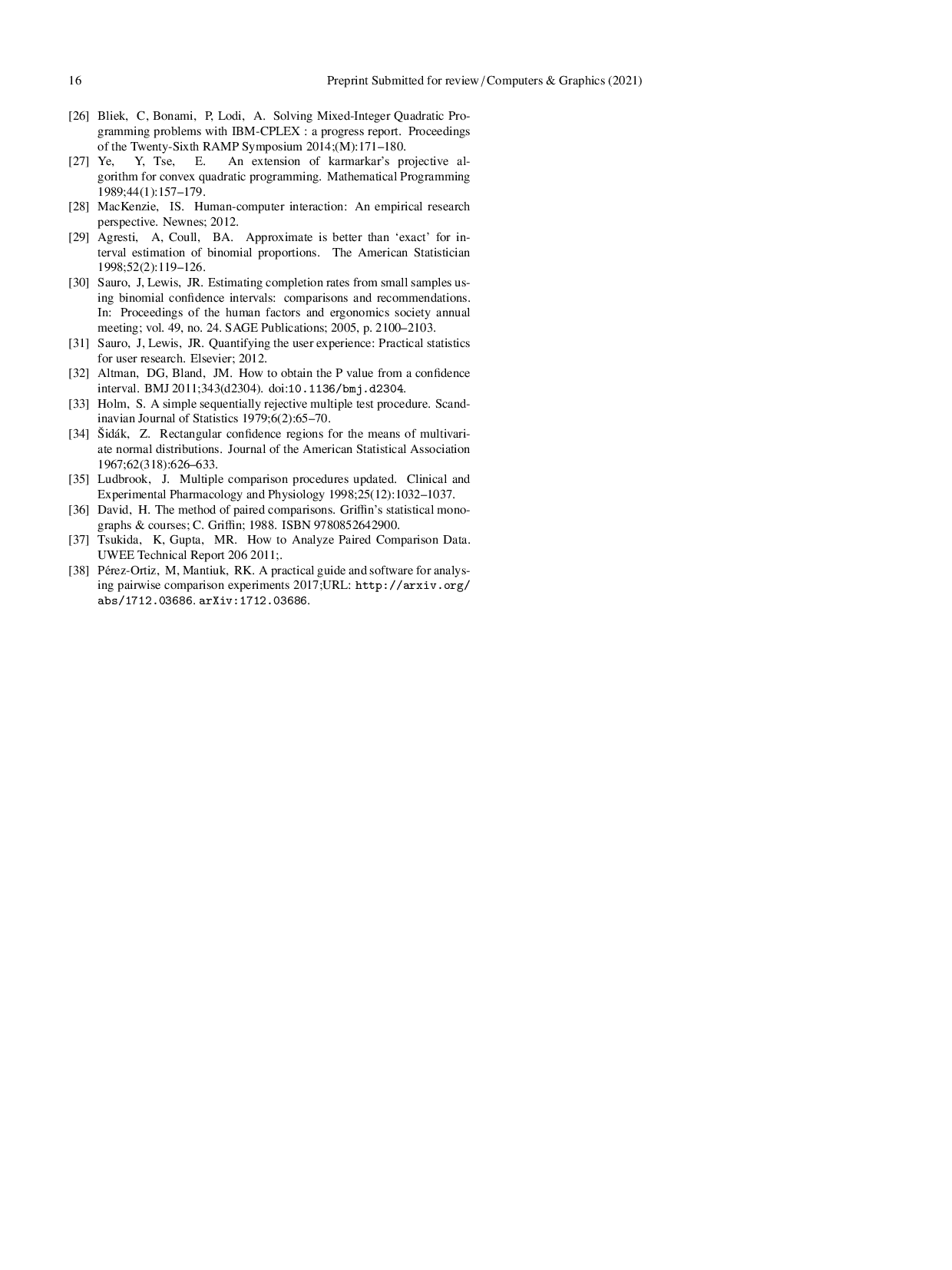

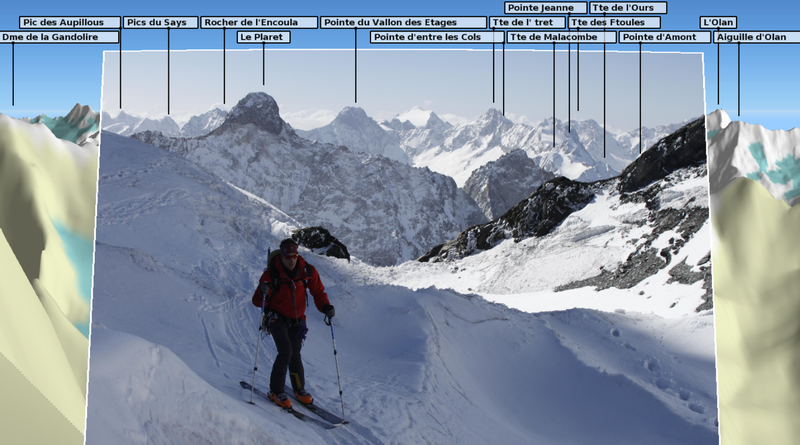

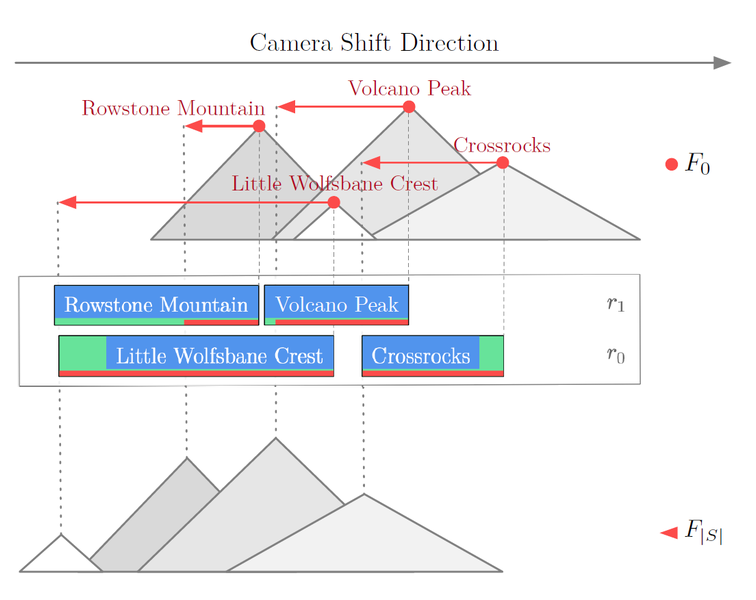

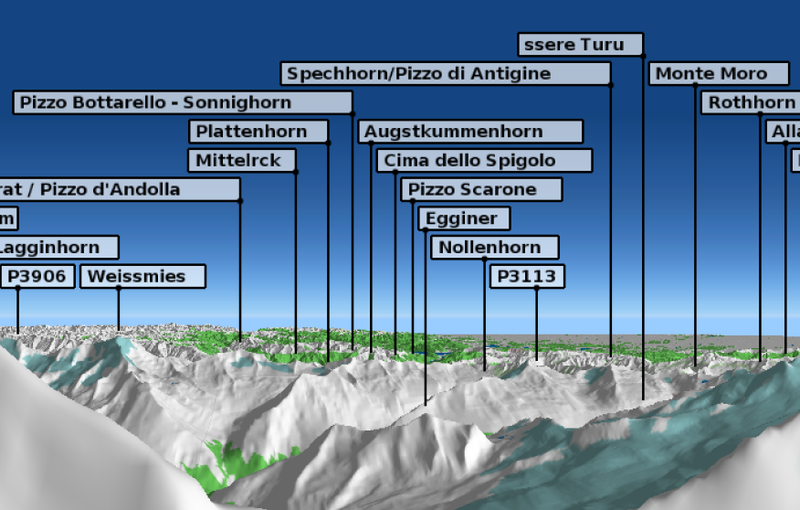

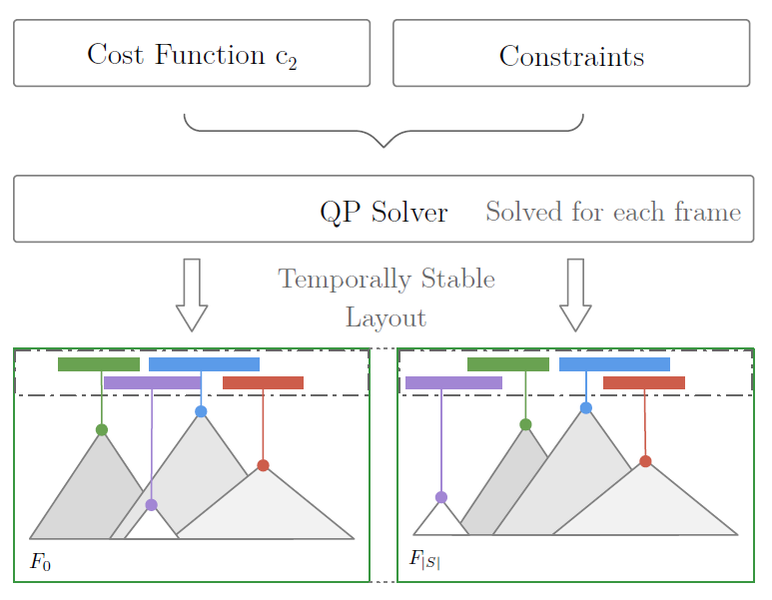

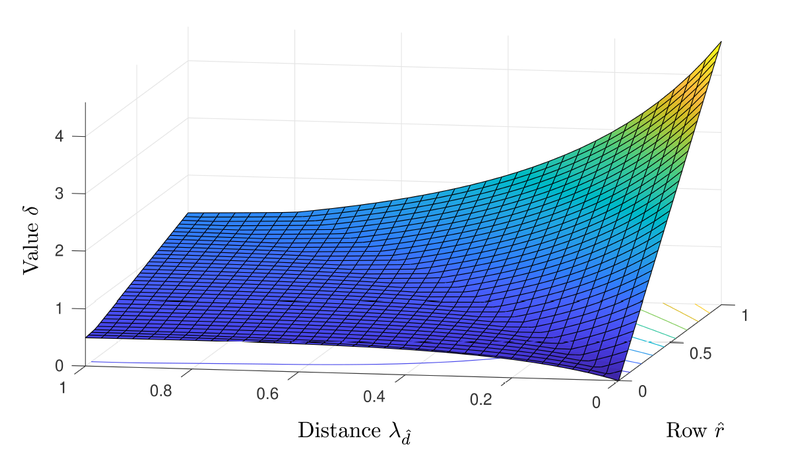

We propose two novel temporally stable screen-space labeling methods for dynamic scenes. The first is suited to offline processing, where the entire interaction or video is available in advance. The second is designed for interactive applications. The main idea of both methods is to minimize the vertical and horizontal movement of the labels during interaction with the scene (e.g., zooming or translating the camera). According to the results of a quantitative evaluation, our labeling is more stable during interaction than labeling produced by the current state of the art. Moreover, participants in a comprehensive user study reported that labeling produced by the proposed methods allowed them to follow moving labels significantly more accurately and was significantly more pleasing than labeling produced by previously published methods. Furthermore, the proposed methods can be extended to account for the prominence of features and are easily parameterized to fit different requirements on the label layout.
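To make the notion of label movement concrete, the following minimal sketch sums the horizontal and vertical screen-space displacement of each label between consecutive frames. It is an illustration only and is not claimed to be the metric used in the quantitative evaluation; the function name `label_movement`, the frame representation (a dictionary from label id to an `(x, y)` position), and the sample data are all assumptions introduced here.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Frame = Dict[str, Point]  # hypothetical layout: label id -> (x, y) screen position


def label_movement(frames: List[Frame]) -> Tuple[float, float]:
    """Return total (horizontal, vertical) label displacement over a frame sequence.

    Only labels present in both frames of a consecutive pair contribute,
    so labels that appear or disappear are ignored in this simple sketch.
    """
    dx_total = 0.0
    dy_total = 0.0
    for prev, curr in zip(frames, frames[1:]):
        for label_id in prev.keys() & curr.keys():
            dx_total += abs(curr[label_id][0] - prev[label_id][0])
            dy_total += abs(curr[label_id][1] - prev[label_id][1])
    return dx_total, dy_total


# Made-up positions for two labels over three frames.
frames = [
    {"a": (10.0, 5.0), "b": (40.0, 5.0)},
    {"a": (12.0, 5.0), "b": (40.0, 8.0)},
    {"a": (12.0, 6.0), "b": (37.0, 8.0)},
]
print(label_movement(frames))
```

Under this assumed measure, a layout with smaller totals would be considered more temporally stable; a real evaluation might also weight movement by label prominence or normalize by camera motion.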