Remote Realistic Rendering for VR and Mobile Devices

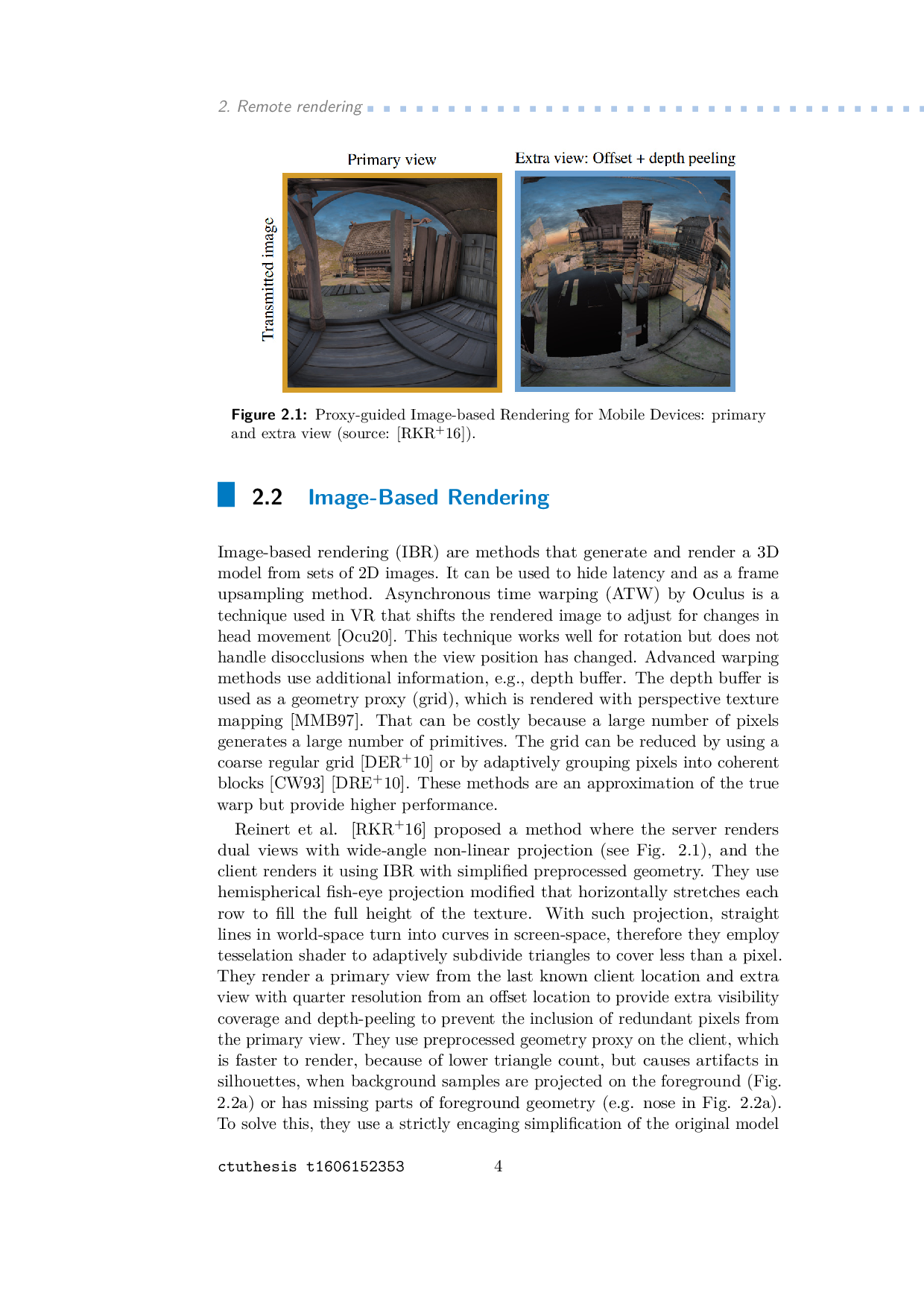

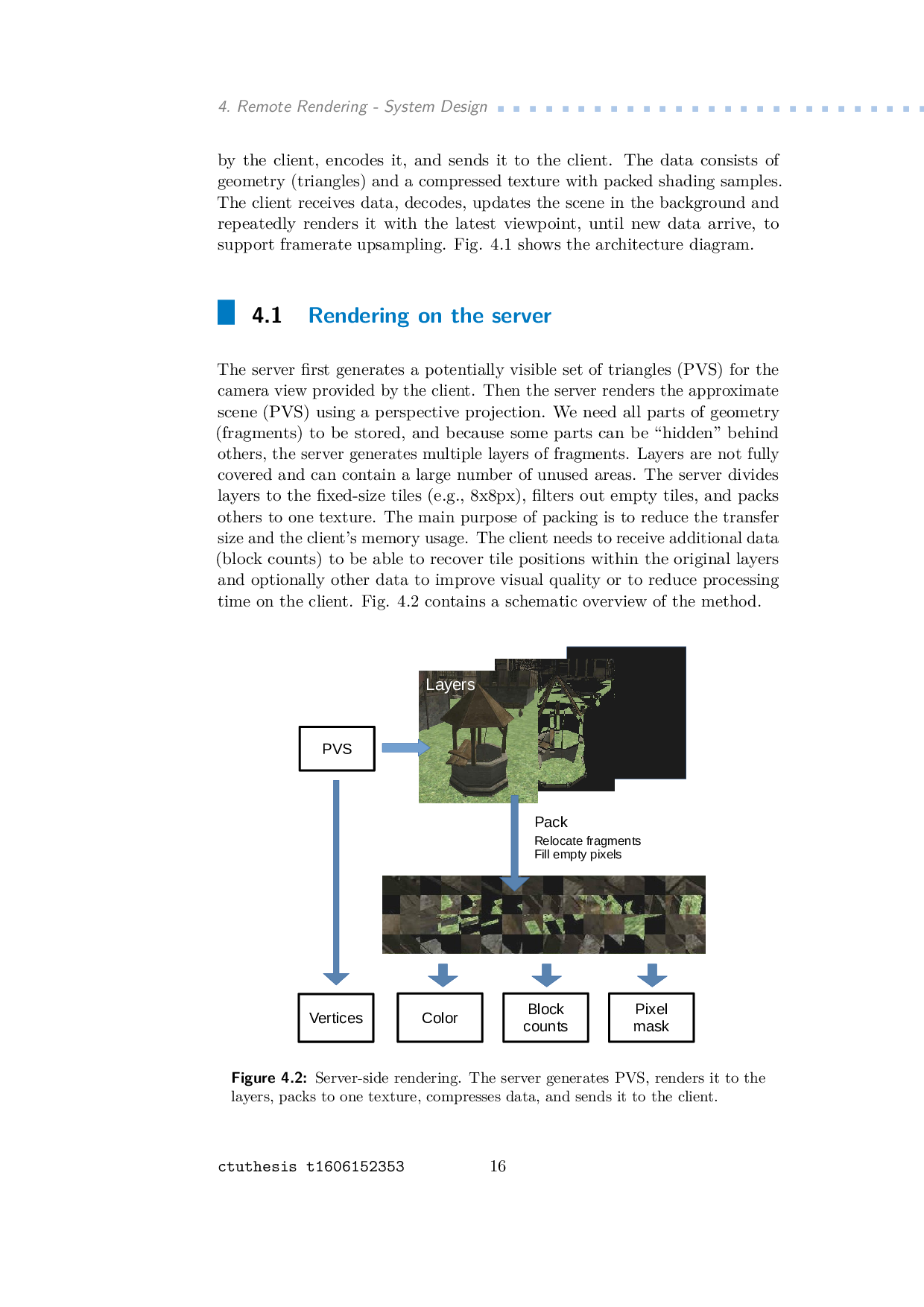

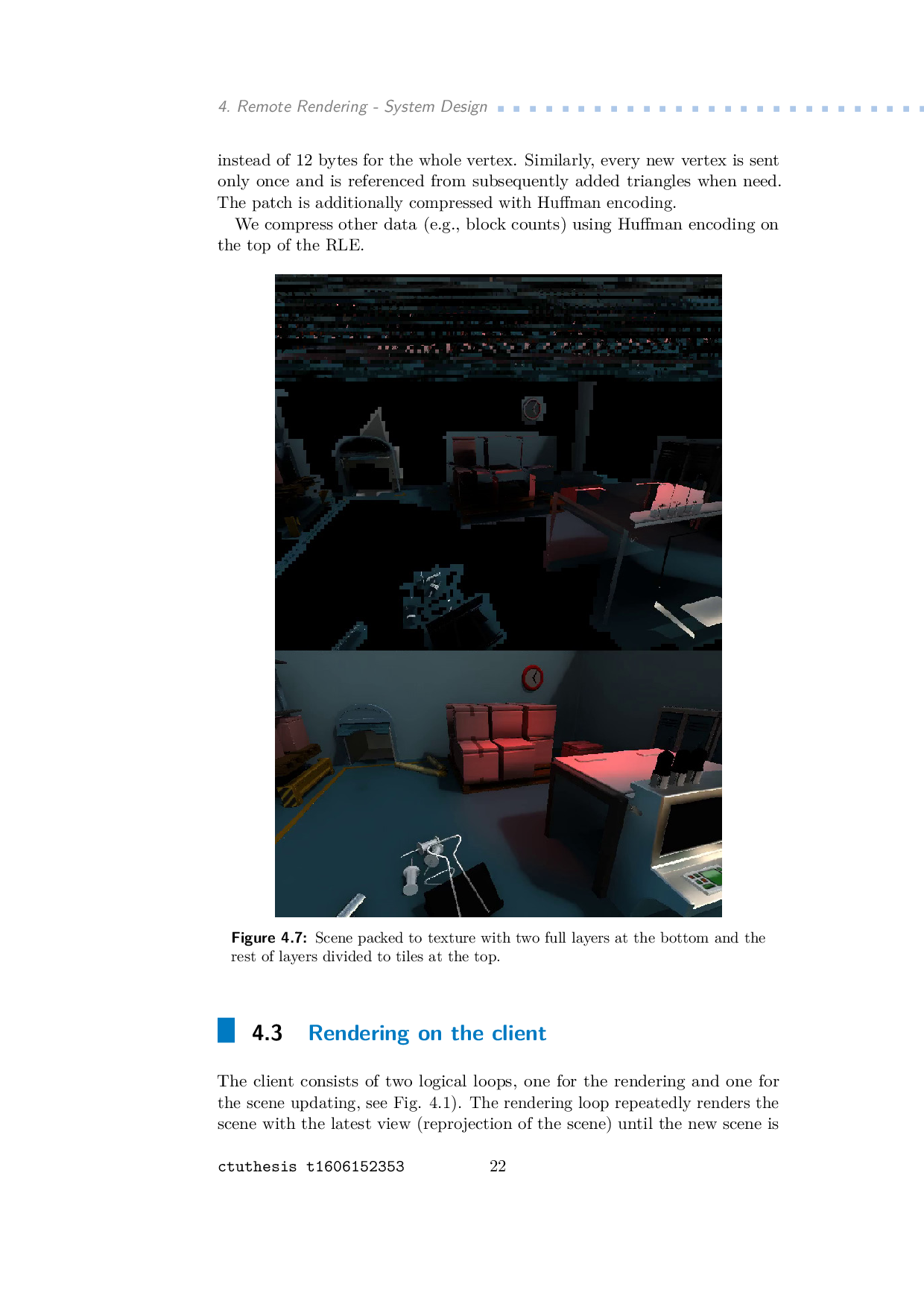

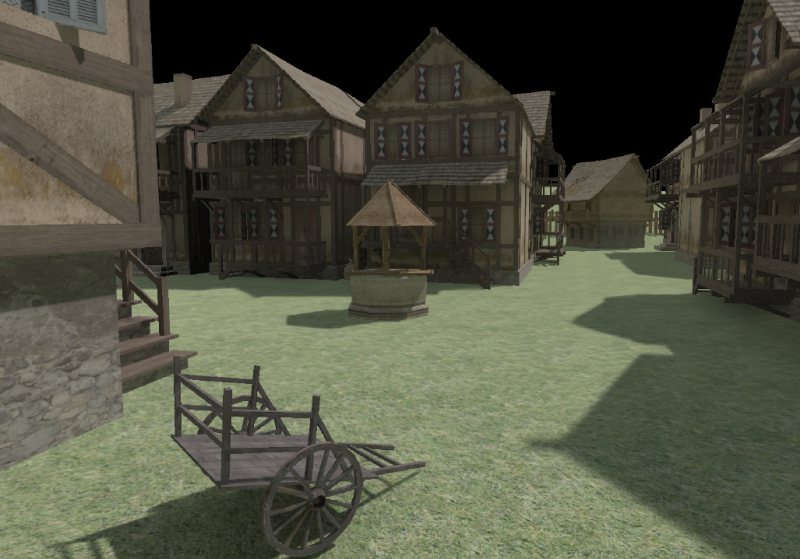

Conventional VR headsets deliver high visual quality but restrict the user's movement because they are tethered to a PC by a cable, while mobile VR headsets have only relatively low-performance GPUs. The goal of this work is a good VR experience without restricting movement: a high refresh rate and an immediate change of the view when the head position changes. In our solution, the scene is rendered on a powerful remote server into multiple layers, packed into a single texture, compressed, and sent to the client together with the potentially visible set of triangles. The client displays the scene at a higher refresh rate than the rate at which it receives updates from the server.
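To illustrate the decoupling of the client refresh rate from the server update rate, the following minimal sketch simulates which server update each client frame would draw from. It is not the implementation used in this work: the function name `schedule_client_frames`, its parameters, and the fixed-latency model are assumptions made only for illustration.

```python
def schedule_client_frames(client_hz, server_hz, latency_s, n_frames):
    """Simulate a client that renders faster than the server updates it.

    Hypothetical model: the server emits update i at time i / server_hz,
    and the client can use it latency_s seconds later (transfer + decode).
    Returns, for each client frame, the index of the newest usable update,
    or None if no update has arrived yet.
    """
    frames = []
    latest = None   # newest server update the client can draw from
    next_seq = 0    # next server update we are waiting for
    for f in range(n_frames):
        t = f / client_hz  # time at which this client frame is rendered
        # Consume every update that has arrived by time t.
        while next_seq / server_hz + latency_s <= t:
            latest = next_seq
            next_seq += 1
        # The client re-renders from `latest` with the current head pose,
        # so the view changes every frame even when `latest` does not.
        frames.append(latest)
    return frames


if __name__ == "__main__":
    # Assumed example rates: 90 Hz client, 30 Hz server, 50 ms latency.
    print(schedule_client_frames(90, 30, 0.05, 18))
```

In this model each server update is reused for several consecutive client frames; the layered texture and the potentially visible set are what would let the client redraw from a new head pose without waiting for the next update.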